Ultimate Guide to A/B Testing for Rental Businesses

Run effective A/B tests for rental businesses: set goals, choose sample sizes, test emails/pricing/forms, and analyze results.

A/B testing helps rental businesses make better decisions by using real data instead of guesses. This method compares two versions of a webpage, email, or pricing strategy to see which performs better. For example, testing a shorter booking form could reduce abandonment rates and increase reservations.

Key takeaways:

- Why it matters: Small improvements add up. Raising your conversion rate by half a percentage point (for example, from 2% to 2.5%) means 25% more bookings from the same traffic, with no extra ad spend.

- Common mistakes: Ending tests too early or ignoring seasonality can lead to unreliable results.

- What to test: Email subject lines, pricing strategies, booking forms, and website features.

- How to succeed: Run tests for at least two weeks, focus on one change at a time, and aim for a 95% confidence level in results.

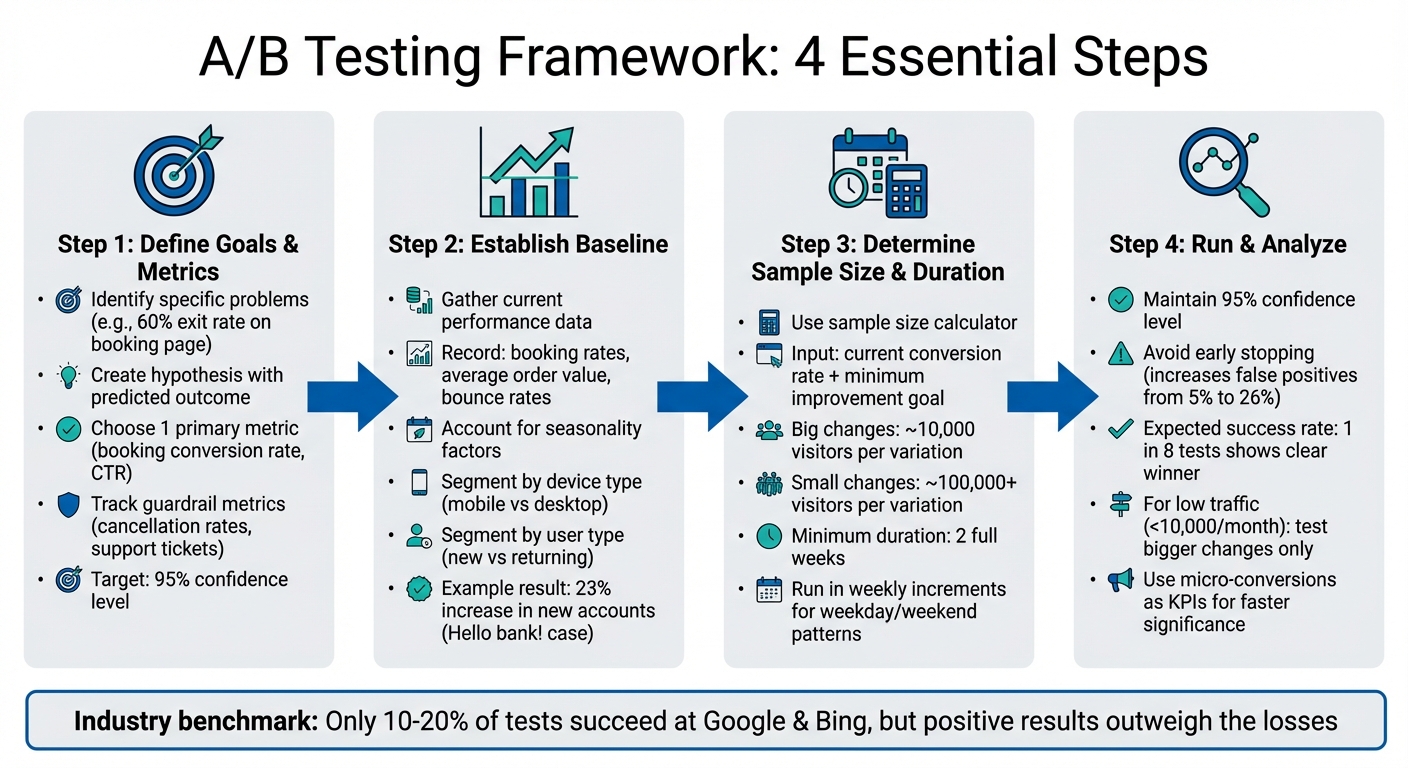

Setting Up Your A/B Testing Framework

A/B Testing Framework for Rental Businesses: 4-Step Process

Starting tests without a clear plan can waste both time and resources. Here's how to lay the groundwork for an effective A/B testing framework.

Defining Goals and Key Metrics

Start by identifying a specific issue in your data. For example, if your booking page has a 60% exit rate, that's a clear problem to address. Develop a hypothesis that offers a solution and predicts an outcome. For instance: "Reducing the booking form from eight fields to four will cut form abandonment by 15% because customers will find it less overwhelming".

Pick one primary metric to measure success - like booking conversion rate or click-through rate on a "Check Availability" button. Also, track guardrail metrics, such as cancellation rates or support tickets, to ensure improvements in one area don't lead to new problems elsewhere.

For rental businesses using automated tools like Lockii, monitoring metrics across the entire customer journey is critical: with no staff on hand to help confused customers, every digital interaction must work seamlessly. Use a 95% confidence level, the industry standard. It means that if your variations actually perform the same, you will wrongly declare a winner only about 1 time in 20. This threshold keeps your framework focused on accurate measurement and analysis.

Establishing a Baseline Performance

Before testing, gather data on your current performance using analytics tools. Record metrics like booking rates, average order value, and bounce rates.

In 2021, Hello bank! (a subsidiary of BNP Paribas Group) assessed their account creation funnel to establish a baseline before testing. Digital Marketing Manager Justine Stevens and her team tested six landing page variations, which led to a 23% increase in new accounts.

Account for seasonality: data from a busy summer period won't reflect slower months like November. Also segment your data by device type (mobile vs. desktop) and user type (new vs. returning), since behavior can vary significantly between these groups.

Determining Sample Size and Duration

Your sample size depends on your baseline conversion rate, the minimum improvement you want to detect, and your desired confidence level. Enter your current conversion rate and the smallest improvement that would matter to your business into a sample size calculator. The smaller the effect you want to detect, the more visitors you need: a bold change expected to move conversions substantially, such as a new booking flow, may need around 10,000 visitors per variation, while a subtle tweak like a button color change could require 100,000 or more.
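To see why smaller expected effects demand more traffic, here is a minimal sketch of the standard normal-approximation formula for comparing two conversion rates. It assumes a two-sided test at 95% confidence and 80% statistical power (a common default, not something this guide prescribes); the function name and the 3% baseline are hypothetical.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH variant to detect `relative_lift` over `baseline_rate`
    with a two-sided two-proportion z-test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)        # the rate the variant must reach
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # about 1.96 at 95% confidence
    z_power = NormalDist().inv_cdf(power)           # about 0.84 at 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_power * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

# Hypothetical 3% booking rate
print(sample_size_per_variant(0.03, 0.20))  # big expected effect: 3.0% -> 3.6%
print(sample_size_per_variant(0.03, 0.05))  # small expected effect: 3.0% -> 3.15%
```

The first call returns roughly 14,000 visitors per variant; the second needs over 200,000. Online calculators apply the same idea, though their exact formulas and defaults may differ slightly.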

Tests should run for at least two full weeks, ideally in weekly increments, to account for weekday versus weekend behavior patterns. If your typical customer takes two weeks to decide before booking, extend the test period accordingly to capture those conversions.
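Turning a sample size into a schedule is simple arithmetic. The sketch below (function name hypothetical) divides the visitors you need by your daily traffic, enforces the two-week minimum, and rounds up to whole weeks so every weekday is equally represented.

```python
import math

def required_test_days(visitors_per_variant: int, num_variants: int,
                       daily_visitors: int, min_days: int = 14) -> int:
    """Days to run a test: enough traffic, at least `min_days`, in whole weeks."""
    days_for_traffic = math.ceil(visitors_per_variant * num_variants / daily_visitors)
    days = max(days_for_traffic, min_days)
    return math.ceil(days / 7) * 7  # round up so each weekday appears equally often

print(required_test_days(14_000, 2, daily_visitors=1_500))  # 21
print(required_test_days(14_000, 2, daily_visitors=330))    # 91
```

At 1,500 visitors a day, a test needing 14,000 visitors per variant fits in three weeks. At roughly 330 a day (about 10,000 a month), the same test would take 13 weeks, which is why low-traffic sites are better off testing bigger changes.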

"The average recommended A/B testing time is 2 weeks, but you should always identify key factors relevant to your own conversion goals." - AB Tasty

For rental businesses with lower traffic (less than 10,000 monthly visitors), focus on testing bigger changes - like headlines or entire page layouts - rather than minor elements. Use micro-conversions, such as "add to cart" or "start booking", as your primary KPI to reach statistical significance faster. Avoid ending tests early, as this can increase false positive rates from 5% to as high as 26%.

With these steps in place, you're ready to move on to designing and running impactful tests in the next phase.

What to Test in Rental Business Communications

When it comes to improving rental business communications, focus on the areas most likely to deliver measurable gains in bookings or operational efficiency. By building on an A/B testing framework, you can evaluate specific elements in messaging, pricing, and website performance to drive more bookings.

Email and SMS Campaign Elements

Subject lines are your first shot at grabbing attention. Test personal touches, like including the recipient's name or referencing the item they browsed. Watch length, too: some benchmarks associate subject lines of about seven words with open rates around 30%. You can also experiment with tone, comparing urgency ("Last chance to book") against a more casual approach to see what resonates.

Your call-to-action (CTA) wording is another critical area to test. For instance, in January 2025, Going, a travel company, replaced "Sign up for free" with "Trial for free", leading to a 104% increase in trial starts. While tweaking button colors or sizes can help, focusing on the actual CTA text often delivers quicker results, especially for rental businesses with smaller traffic volumes.

Timing matters when sending messages. Test different days and times to align with customer habits. For example, Cargo Crew ran several A/B tests on their post-purchase email flow, testing subject lines and timing. The result? A 3.5x increase in revenue per recipient.

For SMS campaigns, try comparing plain SMS to MMS with images. In 2024, Fast Growing Trees discovered that plain SMS outperformed MMS, delivering 10x the expected click rate at just one-third of the cost. This approach contributed to a 231.7% quarter-over-quarter increase in SMS click rates.

Booking and Pricing Strategies

Messaging isn't the only thing to test - pricing and form design play a big role in customer decisions. When testing pricing, focus on emphasizing value rather than just changing numbers. Highlight what makes your rental unique, such as instant booking confirmation or 24/7 availability.

Measure total revenue instead of just conversion rates when testing prices. While a lower price might boost bookings, it could hurt overall profitability. According to McKinsey & Company, even a 1% improvement in pricing strategy can lead to an 11–12% increase in profits.
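A minimal sketch of that comparison, using made-up numbers purely for illustration: revenue per visitor (bookings × average booking value ÷ visitors) captures both how many people book and how much each booking is worth. The class name and prices are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PriceVariant:
    name: str
    visitors: int
    bookings: int
    avg_booking_value: float  # average revenue per booking, in dollars

    @property
    def conversion_rate(self) -> float:
        return self.bookings / self.visitors

    @property
    def revenue_per_visitor(self) -> float:
        # Combines conversion rate and booking value into one number
        return self.bookings * self.avg_booking_value / self.visitors

# Hypothetical results: B is cheaper and converts better, but earns less
variant_a = PriceVariant("A: $100 rental", visitors=10_000, bookings=300, avg_booking_value=100.0)
variant_b = PriceVariant("B: $90 rental", visitors=10_000, bookings=320, avg_booking_value=90.0)

for v in (variant_a, variant_b):
    print(f"{v.name}: conversion {v.conversion_rate:.1%}, "
          f"revenue/visitor ${v.revenue_per_visitor:.2f}")
```

Variant B wins on conversion rate (3.2% vs. 3.0%) but loses on revenue per visitor ($2.88 vs. $3.00). In a real test you would also check whether that revenue gap is statistically significant, which requires per-visitor revenue data rather than just totals.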

Testing incentives can also provide insight. For example, Brava Fabrics compared a 10% discount with a "chance to win a $300 gift card" on their sign-up forms. Both performed equally well, so they opted for the gift card model to protect profit margins. Rental businesses could try similar tests, like offering "$20 off rentals over $100" versus "10% off your first rental."
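Before testing two incentives, it helps to know what each one costs you at different order sizes. This sketch (function names hypothetical) compares the two example offers; it assumes "over $100" means strictly more than $100 and ignores the "first rental" restriction.

```python
def flat_discount(order_total: float, threshold: float = 100.0, amount: float = 20.0) -> float:
    """'$20 off rentals over $100': a fixed amount, only above the threshold."""
    return amount if order_total > threshold else 0.0

def percent_discount(order_total: float, percent: float = 10.0) -> float:
    """'10% off': scales with the order total."""
    return order_total * percent / 100

for total in (80.0, 150.0, 200.0, 250.0):
    flat, pct = flat_discount(total), percent_discount(total)
    if flat < pct:
        cheaper = "flat offer"
    elif pct < flat:
        cheaper = "percentage offer"
    else:
        cheaper = "neither (equal)"
    print(f"${total:.0f} order: flat ${flat:.2f}, percent ${pct:.2f} -> cheaper for you: {cheaper}")
```

Between $100 and $200 the flat offer is more generous; above $200 the percentage offer is. If most of your rentals fall in one range, you know which offer costs more before you measure which one converts better.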

Form complexity is another area worth testing. In October 2024, Caden Lane ran 30 versions of their pop-up form and found that asking visitors for more context - like whether they were shopping for a newborn or older child - led to a 157.3% year-over-year growth in attributed revenue. For rental businesses, asking a simple question like "What are you renting for?" could provide actionable insights without adding too much friction.

Website and Widget Performance

Your booking widget can make or break conversions. Test its placement (e.g., sidebar vs. top-of-page) and behavior (auto-expanding vs. click-to-open). For tools like Lockii, you can also test when the widget appears - immediately or after 20 seconds on the page - to see what drives more engagement.

Navigation flow should guide users smoothly toward booking. In 2025, Grene, an agriculture eCommerce brand, revamped its mini cart by adding a CTA at the top, enlarging the "Go To Cart" button, and including a "remove" option for items. Over 36 days, these changes doubled the total purchased quantity and improved their conversion rate from 1.83% to 1.96%.

Simplify your forms to reduce friction. PayU, a fintech company, removed the email address field from their checkout and only required a mobile number. This small change boosted conversions by 5.8%. For rental businesses, consider whether all eight fields in your booking form are necessary upfront or if some details can be collected later.

Finally, test social proof placement to build trust without distracting users. WorkZone, a project management software company, changed customer testimonial logos from color to black and white on their lead generation page. This subtle tweak led to a 34% increase in form submissions over 22 days, with 99% statistical significance.

Running and Analyzing Your A/B Tests

Designing and Running Tests

Start by crafting a clear hypothesis that connects a specific problem with a proposed solution. For instance, if few visitors click your blue "Book Now" button, you might hypothesize that switching it to orange will make it stand out and lead to more rental inquiries.

Ensure traffic is randomized evenly between test variations, and exclude any outliers that could skew your results. Most testing tools handle traffic splitting automatically, so the only difference between groups is the element you're testing. Once you've identified the winning variation, promptly remove the code for the losing version to keep your site running smoothly.
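Testing tools differ in how they split traffic, but a common approach is deterministic hashing: each visitor ID is hashed together with the experiment name, so a returning visitor always sees the same variant and separate experiments split independently. A minimal sketch, assuming you have a stable visitor or customer ID; the function name, IDs, and experiment name are hypothetical.

```python
import hashlib
from collections import Counter

def assign_variant(user_id: str, experiment: str,
                   variants: tuple[str, ...] = ("control", "treatment")) -> str:
    """Deterministically map a user to a variant for one experiment."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    # SHA-256 output is effectively uniform, so the modulo gives an even split
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % len(variants)
    return variants[bucket]

# The same visitor always lands in the same group for this experiment
print(assign_variant("customer-1042", "booking-widget-delay"))

# Across many visitors, the split comes out close to 50/50
counts = Counter(assign_variant(f"visitor-{i}", "booking-widget-delay")
                 for i in range(10_000))
print(counts)
```

Because assignment depends only on the ID and experiment name, no lookup table is needed, and a visitor who returns days later still sees a consistent page.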

Be cautious about external factors that could interfere with your test. For example, if a marketing campaign drives traffic exclusively to one variation, it introduces bias and undermines the reliability of your data. By designing tests carefully and maintaining controlled conditions, you can move on to verifying your results with proper statistical analysis.

Measuring Statistical Significance

Aim for a 95% confidence level, which limits the risk of declaring a winner when there is no real difference to about 5%. To determine the sample size you need, consider your current conversion rate and the smallest improvement you'd like to detect.
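Most testing tools run this calculation for you. For simple conversion metrics it is typically a two-proportion z-test; the sketch below shows the idea with Python's standard library and hypothetical booking counts. It also reports a 95% confidence interval for the difference, which tells you how large the effect might plausibly be, something a p-value alone does not.

```python
import math
from statistics import NormalDist

def compare_conversion_rates(conv_a: int, n_a: int, conv_b: int, n_b: int,
                             confidence: float = 0.95):
    """Two-sided two-proportion z-test plus a confidence interval for p_b - p_a."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate: under the "no difference" hypothesis both groups share one rate
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    # Unpooled standard error for the interval around the observed difference
    se_diff = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = p_b - p_a
    return p_value, (diff - z_crit * se_diff, diff + z_crit * se_diff)

# Hypothetical test: 10,000 visitors per variant, 300 vs. 360 bookings
p_value, (low, high) = compare_conversion_rates(300, 10_000, 360, 10_000)
print(f"p = {p_value:.4f}, difference between {low:.2%} and {high:.2%}")
```

Here p comes out below 0.05 and the interval (roughly +0.1 to +1.1 percentage points) excludes zero, so variant B would count as a winner at 95% confidence. With 320 bookings instead of 360, the same check would not reach significance.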

Run your tests for at least two full weeks to account for differences in weekday and weekend user behavior. Interestingly, only about one in eight tests results in a clear, statistically significant winner. This is entirely normal - even companies like Google and Bing see positive outcomes in just 10–20% of their tests. If your site sees fewer than 10,000 visitors a month, focus on testing major elements like headlines or page layouts instead of smaller details like button colors.

Avoiding Common Analysis Mistakes

Resist the urge to "peek" at your results daily and stop as soon as they look good. Ending a test the first time the p-value dips below 0.05 greatly increases the risk of false positives. As Jonathan Fulton points out, p-values can also be misleading on their own because they don't reflect the size of the effect.
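To see why peeking inflates false positives, you can simulate A/A tests, where both variants are identical and any "winner" is a false positive. This sketch, with hypothetical traffic numbers, compares checking once at the end of a 14-day test against checking every day and stopping at the first p < 0.05. It uses a plain pooled z-test and a seeded random generator, so exact rates will vary with the settings.

```python
import math
import random
from statistics import NormalDist

def z_test_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    if p_pool in (0.0, 1.0):
        return 1.0  # no variation yet, so no evidence either way
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def simulate_aa_tests(sims: int = 500, days: int = 14, daily_per_arm: int = 100,
                      rate: float = 0.05, alpha: float = 0.05, seed: int = 42):
    """Return (false-positive rate with daily peeking, rate with one final look)."""
    rng = random.Random(seed)
    peeking_hits = final_hits = 0
    for _ in range(sims):
        conv_a = conv_b = n = 0
        significant_at_some_peek = False
        for _ in range(days):
            # Both arms share the same true rate: any "winner" is a false positive
            conv_a += sum(rng.random() < rate for _ in range(daily_per_arm))
            conv_b += sum(rng.random() < rate for _ in range(daily_per_arm))
            n += daily_per_arm
            p = z_test_p_value(conv_a, n, conv_b, n)
            if p < alpha:
                significant_at_some_peek = True  # a daily peeker would stop here
        peeking_hits += int(significant_at_some_peek)
        final_hits += int(p < alpha)  # p from the last day = the single planned look
    return peeking_hits / sims, final_hits / sims

peeking_rate, final_rate = simulate_aa_tests()
print(f"False positives with daily peeking: {peeking_rate:.1%}")
print(f"False positives with one final look: {final_rate:.1%}")
```

The single-look rate should land near the 5% you signed up for, while the daily-peeking rate typically comes out several times higher.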

Keep an eye out for sample ratio mismatches. If your test is supposed to split traffic evenly but, across thousands of visitors, ends up at 55/45, that likely indicates a problem with how users are assigned to variations. Many tools can flag these problems automatically, helping you maintain reliable data.
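A sample ratio mismatch check is usually a chi-square goodness-of-fit test on visitor counts. This minimal sketch (function name hypothetical) assumes a planned 50/50 split by default. Flagging only at a strict threshold, such as p < 0.001, is a common choice because the check runs on every test; treat that cutoff as an assumption rather than a universal rule.

```python
import math

def srm_p_value(visitors_a: int, visitors_b: int, expected_share_a: float = 0.5) -> float:
    """Chi-square goodness-of-fit test (1 degree of freedom) for the traffic split."""
    total = visitors_a + visitors_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((visitors_a - expected_a) ** 2 / expected_a
            + (visitors_b - expected_b) ** 2 / expected_b)
    # For 1 degree of freedom, the chi-square tail probability equals erfc(sqrt(chi2 / 2))
    return math.erfc(math.sqrt(chi2 / 2))

print(srm_p_value(55, 45))        # small sample: a 55/45 split is plausible by chance
print(srm_p_value(5_500, 4_500))  # large sample: the same split is a red flag
```

The first call returns about 0.32, so 55 vs. 45 visitors is unremarkable. The second returns a vanishingly small p-value, so a 55/45 split over 10,000 visitors almost certainly points to an assignment or tracking bug.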

Be aware of the novelty effect, where initial excitement about a change temporarily boosts results. To get a clearer picture of your changes’ lasting impact, segment your analysis by new versus returning users. Also, avoid running tests during holidays or peak rental seasons when traffic patterns might be unusual.

Finally, stick to testing one element at a time. This approach makes it easier to identify what’s driving the changes in user behavior.

Applying Results and Building a Testing Process

Rolling Out Winning Variations

Once you've pinpointed a winning variation, roll it out across similar campaigns or pages to amplify its impact. Tools with auto-winner features can help by deploying top-performing variations quickly - often within just 2–4 hours.

Take note of the elements that made the variation successful, whether it's a specific call-to-action, an image style, or a particular tone in messaging. Incorporate these elements into future campaigns to build on prior successes. This approach creates a snowball effect, where each success lays the groundwork for the next. For example, if testing shows that optimizing send times can increase click-through rates by 8% and conversion rates by 33%, make those send times a standard part of your process.

Be sure to document these rollouts thoroughly, as this will help refine your testing strategies moving forward.

Documenting Results and Planning Future Tests

Create a standardized template for documenting tests. This should include key details like the hypothesis, target audience, screenshots of variations, final results with confidence intervals, and a section for insights and next steps. Store this information in a centralized, searchable repository - such as a shared document, a Notion database, or an internal wiki - tagged with relevant keywords like "pricing", "checkout", or "booking flow".
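If your team keeps records in code or exports them to a database, a structured record keeps every test documented the same way. This sketch mirrors the template fields listed above; the class, field, and function names are hypothetical, and the example entry restates the booking-form hypothesis from earlier with its results left blank until the test finishes.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from typing import Optional

@dataclass
class ExperimentRecord:
    name: str
    hypothesis: str
    audience: str
    primary_metric: str
    screenshots: list[str] = field(default_factory=list)  # paths or URLs of variant images
    result: str = ""                                        # e.g. "winner: B", "no difference"
    ci_low: Optional[float] = None                          # confidence interval for the lift
    ci_high: Optional[float] = None
    insights: str = ""
    next_steps: str = ""
    tags: list[str] = field(default_factory=list)           # e.g. "pricing", "checkout"

def find_by_tag(records: list[ExperimentRecord], tag: str) -> list[ExperimentRecord]:
    """Return every record carrying the given tag (case-insensitive)."""
    return [r for r in records if tag.lower() in (t.lower() for t in r.tags)]

record = ExperimentRecord(
    name="Four-field booking form",
    hypothesis=("Reducing the booking form from eight fields to four will cut "
                "form abandonment by 15% because customers will find it less overwhelming"),
    audience="All booking-page visitors, mobile and desktop",
    primary_metric="Booking form completion rate",
    tags=["booking flow", "forms"],
)

repository = [record]
print(json.dumps(asdict(record), indent=2))  # ready to paste into a wiki or database
print([r.name for r in find_by_tag(repository, "Booking Flow")])
```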

Don’t just document your successes - record your failures too. Since only about 33% of A/B tests lead to measurable improvements, understanding what didn’t work can prevent future teams from repeating the same mistakes. These failures also help prioritize future experiments. To decide which ideas to test next, use a framework like ICE (Impact, Confidence, Ease) to rank experiments and focus resources on the most promising opportunities.
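ICE scoring is easy to automate once ideas are rated. In this sketch each factor is scored from 1 to 10 and the ICE score is their average; some teams multiply the three instead, which widens the gap between strong and weak ideas. The ideas and scores are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ExperimentIdea:
    name: str
    impact: int       # how much could this move the primary metric? (1-10)
    confidence: int   # how sure are we it will work? (1-10)
    ease: int         # how cheap and quick is it to build and run? (1-10)

    def __post_init__(self) -> None:
        for score in (self.impact, self.confidence, self.ease):
            if not 1 <= score <= 10:
                raise ValueError(f"ICE scores must be between 1 and 10, got {score}")

    @property
    def ice_score(self) -> float:
        return (self.impact + self.confidence + self.ease) / 3

backlog = [
    ExperimentIdea("Shorter booking form", impact=8, confidence=7, ease=6),
    ExperimentIdea("Orange 'Book Now' button", impact=3, confidence=4, ease=9),
    ExperimentIdea("Value-based pricing page", impact=9, confidence=5, ease=3),
]

for idea in sorted(backlog, key=lambda i: i.ice_score, reverse=True):
    print(f"{idea.ice_score:4.1f}  {idea.name}")
```

The shorter booking form ranks first (7.0), followed by the pricing page and the button color change.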

Building a Continuous Testing Process

Use the insights from your documented tests as a foundation for ongoing improvements. Schedule quarterly reviews to identify trends across multiple tests, which can guide your strategic planning and roadmap priorities. When a test succeeds, treat the winning variation as the new baseline for your next experiment, creating a cycle of continuous improvement.

For businesses with limited resources, consider a lean approach to testing. Build minimum viable versions of features to validate demand before committing to full-scale development. For instance, the StaySense vacation rental team added a prototype button to gauge interest in a user-generated content feature. By tracking clicks without building any backend functionality, they discovered demand was lower than expected and adjusted their plans, saving valuable development time.

After implementing a winning variation, monitor key metrics for 2–4 weeks to ensure the gains are sustainable and not just a temporary spike. Pair your quantitative A/B test results with qualitative insights from customer feedback surveys, heatmaps, or support tickets. This combination helps you understand the reasons behind behavior changes, leading to better hypotheses for future tests.

"A/B testing is not just for immediate optimization. It's also for informing future marketing decisions based on precise audience preferences and behaviors." - Ricky Hagen, Retention Marketing Manager at JAXXON

Conclusion: Growing Your Rental Business Through A/B Testing

A/B testing transforms how rental businesses make decisions. Instead of relying on intuition or gut feelings, it provides clear, measurable data to show what actually works. This shift from guessing to knowing eliminates unnecessary risks and ensures resources are used wisely.

The real advantage lies in steady, incremental growth. While a single test might bring a small improvement, running 10–15 tests a year allows these small wins to add up. Over time, these gains can significantly increase conversion rates without requiring a higher advertising budget. Even major players like Google and Bing find that only 10–20% of their tests succeed, but the positive results more than make up for the losses.
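The arithmetic behind "small wins add up" is multiplicative: each winning variation becomes the new baseline, so relative lifts compound. A minimal sketch with hypothetical numbers, assuming each gain persists after rollout (in practice some fade, as with the novelty effect):

```python
def compounded_lift(relative_lifts: list[float]) -> float:
    """Combine sequential relative lifts, e.g. [0.05, 0.04] -> about +9.2% overall."""
    total = 1.0
    for lift in relative_lifts:
        total *= 1 + lift  # each winner multiplies the previous baseline
    return total - 1

# Hypothetical year: 12 tests, of which three produce winners
wins = [0.04, 0.06, 0.05]
baseline_rate = 0.02  # 2% of visitors book
overall = compounded_lift(wins)
print(f"Overall lift: {overall:.1%}")  # Overall lift: 15.8%
print(f"Conversion rate: {baseline_rate:.1%} -> {baseline_rate * (1 + overall):.2%}")
```

Three modest wins add up to nearly 16% more bookings from the same traffic, slightly more than the 15% you would get by simply adding the individual lifts.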

For rental businesses, testing is a practical way to make smarter decisions with limited resources. It allows you to test demand before committing to big investments in new features or services. By gauging customer interest early, you can allocate your budget to ideas that show real potential. This "fail fast" approach minimizes risks, helping you avoid launching pricing strategies or booking systems that could hurt your revenue or customer satisfaction.

Platforms like Lockii make it easier to adopt this testing mindset. With tools like SMS and email automation, embeddable booking widgets, and self-service portals, you can efficiently experiment with communication strategies and booking processes. These automated features handle growing customer demand, so you can focus on understanding what drives conversions. As your business expands to multiple locations, the insights gained from testing become even more critical for maintaining consistent performance.

Starting with small, regular tests gives you a competitive advantage - something many businesses still overlook. By making A/B testing a core part of your strategy, you create a cycle of continuous improvement that fuels long-term growth for your rental business.

FAQs

What’s the best first A/B test for a low-traffic rental website?

For rental websites with lower traffic, begin with straightforward A/B tests targeting elements that influence user engagement the most. For example, try testing the call-to-action (CTA) button or the headline on your homepage or booking page. Keep it simple - experiment with one element at a time. You could compare CTA texts like "Book Now" versus "Reserve Your Rental" to see which resonates more with visitors. These focused tests can still yield meaningful insights, even with a smaller audience.

How do I choose the right success metric for a rental A/B test?

To pick the right metric for measuring success in a rental A/B test, start by identifying the specific outcome you’re aiming to enhance. Common metrics include:

- Conversion rates, such as the percentage of completed bookings.

- Customer engagement, like the amount of time users spend on booking pages.

- Operational efficiency, such as reducing booking errors.

The key is to select a metric that aligns closely with your objectives and is sensitive enough to detect meaningful changes in your rental process.

How can I avoid seasonality skewing my A/B test results?

To ensure your A/B test results are accurate and unaffected by seasonal quirks, it's crucial to run tests during steady periods, avoiding times of major seasonal shifts. Events like holidays, weather changes, or evolving industry trends can significantly impact customer behavior on their own, creating data that doesn’t reflect your test variables. By choosing periods of consistent demand, you can better isolate the effects of your test. This is especially important for rental businesses, where bookings often see natural ups and downs throughout the year.